
In short, this CNN is composed of the following 9 layers: 1) Convolutional layer with 30 feature maps of size 5×5, 2) Pooling layer taking the max over 2*2 patches, 3) Convolutional layer with 15 feature maps of size 3×3, 4) Pooling layer taking the max over 2*2 patches, 5) Dropout layer with a probability of 20%, 6) Flatten layer, 7) Fully connected layer with 128 neurons and rectifier activation, 8) Fully connected layer with 50 neurons and rectifier activation, 9) Output layer. The difference between learning rates was very small, with the best results using 0.001.įor the Convolutional Neural Network in Keras (using TensorFlow backend), we adapted the architecture from a tutorial by Jason Brownlee. We chose two layers of 100 nodes as a compromise between accuracy and time use (adding a second layer did not change the accuracy, but it reduced fitting time by half). We tried one or two layers of 784 nodes, which slightly increased accuracy at the cost of a much higher computing time. The default option is one layer of 100 nodes. The default option is 5, which we found to be optimal in this case.įor the Multi-Layer Perceptron (MLP), the structure of the hidden layer(s) is a major point to consider. In the K-Nearest Neighbor classifier, the central parameter is K, the number of neighbors. For this task, we found 100 trees to be a sufficient number, as there is hardly any increase in accuracy beyond that. Increasing this number usually achieves a higher accuracy, but computing time also increases significantly. The most important parameter for the Random Forest classifier is the number of trees, which is set to 10 by default in scikit-learn. Figure 2 : Accuracy scores for RF, KNN, MLP and CNN classifiers The KNN classifier came in second, followed by the MLP and Random Forest. As one might expect, the CNN had the highest accuracy by far (up to 96%), but it also required the longest computing time.

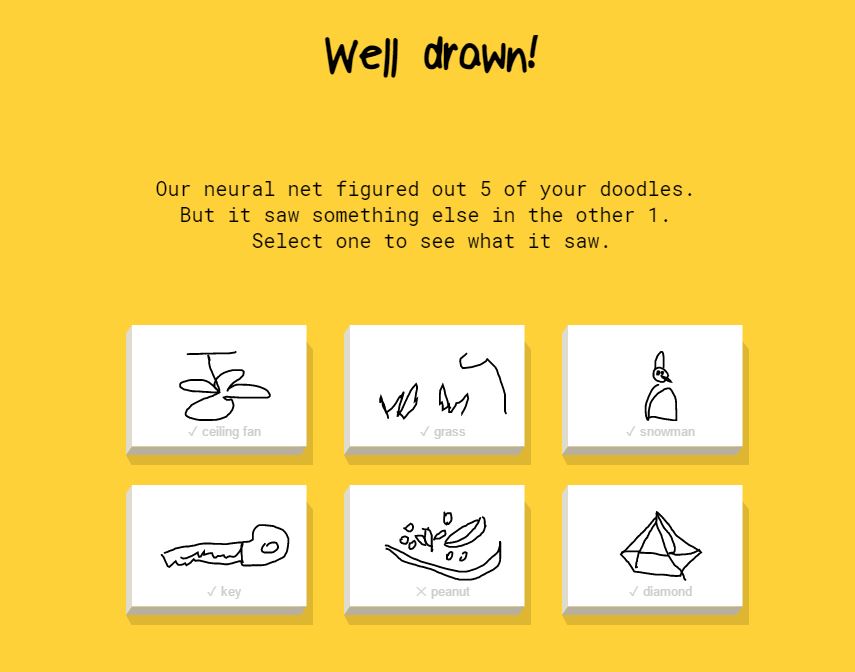

The performance of these algorithms on a test set is shown in Figure 2. We tested the Random Forest, K-Nearest Neighbors (KNN) and Multi-Layer Perceptron (MLP) classifiers in scikit-learn as well as a Convolutional Neural Network (CNN) in Keras. Figure 1 : Samples of the drawings made by users of the game “Quick, Draw!” While there are over 120,000 images per category available, we only used up to 7,500 per category, as training gets very time-consuming with an increasing number of samples. Figure 1 gives you an idea of what these drawings look like. We first tested different binary classification algorithms to distinguish cats from sheep, a task that shouldn’t be too difficult. Other datasets are available too, including for the original resolution (which varies depending on the device used), timestamps collected during the drawing process, and the country where the drawing was made.

We used a dataset that had already been preprocessed to a uniform 28x28 pixel image size. Here we will present the results without providing any code, but you can find our Python code on Github. With the project summarized below, we aimed to compare different machine learning algorithms from scikit-learn and Keras tasked with classifying drawings made in “Quick, Draw!”. Compared with digits, the variability within each category of the “Quick, Draw!” data is much bigger, as there are many more ways to draw a cat than to write the number 8, say.Ī Convolutional Neural Network in Keras Performs Best

By contrast, the MNIST dataset – also known as the “Hello World” of machine learning – includes no more than 70,000 handwritten digits. The dataset consists of 50 million drawings spanning 345 categories, including animals, vehicles, instruments and other items. Once the prediction is correct, you can't finish your drawing, which results in some drawings looking rather weird (e.g., animals missing limbs). In this game, you are told what to draw in less than 20 seconds while a neural network is predicting in real time what the drawing represents. Google uses this approach with the game “Quick, Draw!” to create the world’s largest doodling dataset, which has recently been made publicly available. One efficient approach for getting such data is to outsource the work to a large crowd of users. Image recognition has been a major challenge in machine learning, and working with large labelled datasets to train your algorithms can be time-consuming.
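To get a feel for the size of the nine-layer CNN described in this post, the layer shapes and parameter counts can be traced by hand. The sketch below does this in plain Python and is not taken from our project code. It rests on several assumptions the post does not state: a 28×28 single-channel input, stride-1 convolutions without padding, non-overlapping 2×2 pooling, and two output units for the cat-versus-sheep task. The helper names (`conv2d`, `max_pool`, `flatten`, `dense`, `trace_cnn`) are hypothetical and only serve this illustration.

```python
# Trace output shapes and trainable-parameter counts through the nine layers.
# Assumptions (not stated in the post): 28x28 single-channel input, stride-1
# convolutions without padding, non-overlapping 2x2 pooling, and two output
# units for the cat-vs-sheep task.


def conv2d(shape, filters, kernel):
    """Unpadded, stride-1 convolution: returns (output shape, parameter count)."""
    h, w, c = shape
    out = (h - kernel + 1, w - kernel + 1, filters)
    params = (kernel * kernel * c + 1) * filters  # weights plus one bias per filter
    return out, params


def max_pool(shape, size):
    """Non-overlapping max pooling has no trainable parameters."""
    h, w, c = shape
    return (h // size, w // size, c), 0


def flatten(shape):
    """Collapse height, width and channels into a single vector."""
    h, w, c = shape
    return (h * w * c,), 0


def dense(shape, units):
    """Fully connected layer: one weight per input plus one bias, per unit."""
    (n,) = shape
    return (units,), (n + 1) * units


def trace_cnn(input_shape=(28, 28, 1), n_classes=2):
    """Return a list of (layer name, output shape, parameter count) tuples."""
    layers = [
        ("conv 5x5, 30 maps", lambda s: conv2d(s, 30, 5)),
        ("max pool 2x2", lambda s: max_pool(s, 2)),
        ("conv 3x3, 15 maps", lambda s: conv2d(s, 15, 3)),
        ("max pool 2x2", lambda s: max_pool(s, 2)),
        ("dropout 20%", lambda s: (s, 0)),  # changes neither shape nor parameters
        ("flatten", flatten),
        ("dense 128, ReLU", lambda s: dense(s, 128)),
        ("dense 50, ReLU", lambda s: dense(s, 50)),
        ("output", lambda s: dense(s, n_classes)),
    ]
    shape, rows = input_shape, []
    for name, layer in layers:
        shape, params = layer(shape)
        rows.append((name, shape, params))
    return rows


if __name__ == "__main__":
    rows = trace_cnn()
    for name, shape, params in rows:
        print(f"{name:<20} {str(shape):<14} {params:>7}")
    print("total parameters:", sum(p for _, _, p in rows))
```

Under these assumptions the flatten layer hands 375 values to the first fully connected layer, and the whole network has 59,525 trainable parameters, most of them in that first fully connected layer. A different padding mode or number of output classes would change these figures.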
